Of the many technologies on the horizon, perhaps none has as much history as artificial intelligence. Although its academic origins date to the 1950s, appearances in science fiction throughout the past century have helped embed AI in the mainstream consciousness. Those appearances have also inflated expectations (some technologists argue that the intelligence in these systems is better described as “assisted” or “augmented” than “artificial”), but recent advances in computing have certainly accelerated the technology’s potential.

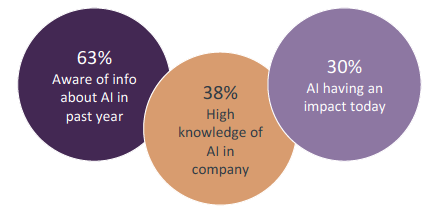

Businesses’ familiarity with AI is reflected in relatively high levels of awareness and expected impact. However, it is worth noting that “artificial intelligence” is really the product of a collection of technologies. AI does not appear as a standalone item on Gartner’s popular Hype Cycle for Emerging Technologies; instead, contributing components such as machine learning, edge computing, and cognitive computing all carry significant hype and are projected to become productive in 2 to 10 years.

Similarly, IDC uses a broad definition of cognitive and artificial intelligence systems when building its revenue projections, but there are subtleties beneath that general classification.

In general, artificial intelligence is the practice of designing computer systems that make intelligent decisions based on context rather than direct input. It is important to understand that AI systems always behave according to rules that have been programmed. Consider a computer playing chess: this may not strike many people today as AI, but it certainly fits the definition of a system that has been given rules and that calculates probabilities and decisions on the fly in response to its opponent’s moves.

Today, AI is gaining both hype and traction as its capabilities approach something that looks like sentience. Specific trends have contributed to this situation, and those trends are the components companies will need as they build AI into their plans.

In terms of revenue, IDC projects that $12.5 billion will be spent on AI systems in 2017 and that spending will grow at a compound annual rate of 54.4% through 2020. However, IDC points out that this activity includes “intelligent applications based on cognitive computing, artificial intelligence, and deep learning.” In many cases, applications and IT components that companies already have in place will gain AI capabilities through upgrades; in others, new purchases focused on a business goal will bring the added benefit of an AI underpinning.
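To put the growth rate in perspective, here is a rough worked calculation. It assumes, for illustration only, that the 54.4% figure is a compound annual growth rate applied to the 2017 base for three years (2018 through 2020); IDC’s own base year and methodology may differ. Here $S_{2017}$ is 2017 spending in billions of US dollars and $r$ is the annual growth rate:

$$
S_{2020} = S_{2017}\,(1 + r)^{3} = 12.5 \times (1.544)^{3} \approx 12.5 \times 3.68 \approx 46.0 \quad \text{(billions of US dollars)}
$$

Under that assumption, annual spending would grow by a factor of roughly 3.7 in three years, to about 46 billion US dollars.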

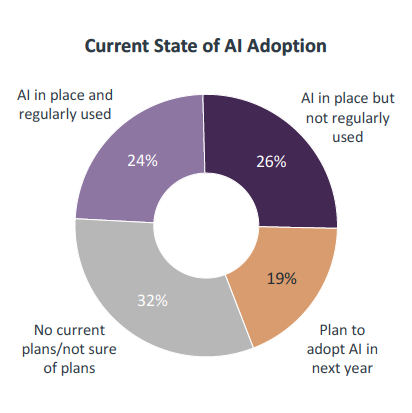

This reality is already evident in the early stages of AI adoption. Among the 50% of companies that are aware of AI already in place at their organization, there is recognition that the capabilities are built into existing tools and that the challenge lies in putting them to use. In many cases, AI will not be an end in itself, but rather a critical ingredient undergirding operations inside digital organizations.

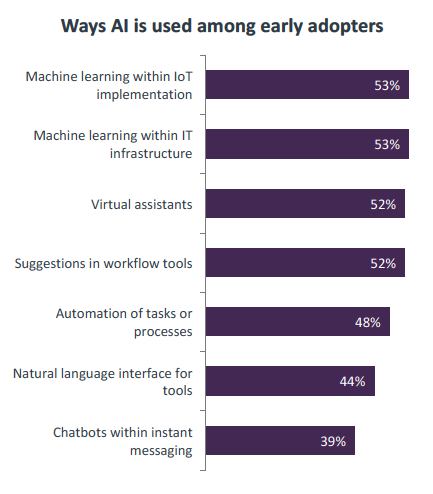

Because AI has a long history in popular culture and high-profile examples in the consumer space, many people have a skewed or limited perception of it. While natural language interfaces and intelligent chatbots are certainly part of the AI ecosystem, they are among the least popular ways that businesses currently use new forms of machine intelligence.

It is no great surprise that AI is often tied to another emerging technology: the Internet of Things (IoT). The complexity of IoT systems practically demands some form of automation and network learning. While certain benefits of IoT can be gained from simpler implementations, large-scale systems will likely include AI as part of the solution.

At an even more basic level, AI is finding its way into standard parts of an IT architecture. Infrastructure components such as firewalls and routers are now enhanced with AI functionality, especially as software-defined networking becomes more prevalent. Even end-user applications are using AI to provide suggestions that can improve quality or usability. Again, AI may not arrive as part of a new corporate initiative; it may come in simply as tools are upgraded or introduced.

Finally, virtual assistants are gaining traction in the workplace. These include tools designed specifically for business (such as Amy Ingram from x.ai) and mass-market products (such as Apple’s Siri or Amazon’s Alexa). In all cases, the software has a contextual understanding that allows information to be shared and tasks to be automated.

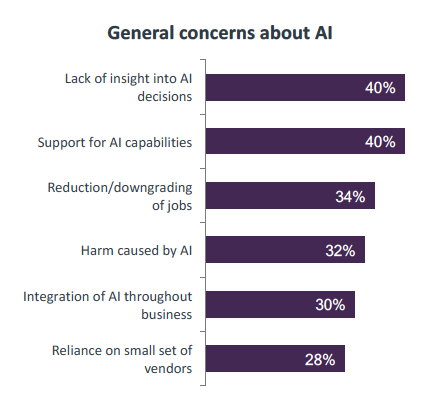

Whether or not companies have started to explore AI, there are definite concerns and challenges in implementing the technology. Topping the list is a fear that may be fueled by sci-fi depictions: a lack of insight into how AI makes decisions. This worry is higher outside the IT function: 40% of executives and 44% of non-IT employees are concerned about this area, compared with just 36% of IT workers. This is a good opportunity for technical specialists to lend their expertise to business discussions.

The tables turn when it comes to the second challenge. Consumer technology has helped create a perception that complex systems have minimal support requirements, and only 33% of executives are worried about supporting new AI capabilities. However, both IT and non-IT employees are gaining an appreciation for the ongoing support needed for an expanded tech footprint; 43% of both groups are concerned about the proper level of support.

A final concern worth noting is the possible elimination of jobs as intelligent computers take over a growing share of work. In general, it is very hard to predict the exact impact of technology across the entire job market, although previous technological revolutions have consistently led to net job gains.

CompTIA’s 2017 IT Industry Outlook references a study by McKinsey & Company on this topic. According to McKinsey, 60% of all occupations have some duties that could be automated to some degree. Of course, automating a subset of duties does not directly correlate with job elimination, but there is no doubt that some entire occupations are at risk as companies turn certain tasks over to AI. Most experts in the field, though, believe that the digital economy will feature new roles that work in concert with intelligent systems.

Artificial intelligence comes with historical baggage and serious questions, but the potential is tremendous. Continued economic growth will require the deep insights that come from AI, but will also require the creativity and empathy that come from human beings connected in a global society.

This research brief is part of a larger study conducted by CompTIA on the awareness and application of emerging technology. Other topics in this series include blockchain, AR/VR, automation, drones, and the business implications of early-stage technology.

The quantitative study consisted of an online survey fielded to U.S. workforce professionals during October 2016. A total of 701 businesses based in the United States participated in the survey, yielding an overall margin of sampling error proxy of ±3.8 percentage points at 95% confidence. Sampling error is larger for subgroups of the data.

As with any survey, the sampling error is only one source of possible error. While non-sampling error cannot be accurately calculated, precautionary steps were taken in all phases of the survey design, collection, and processing of the data to minimize its influence.

CompTIA is responsible for all content and analysis. Any questions regarding the study should be directed to CompTIA Research and Market Intelligence staff.

CompTIA is a member of the market research industry’s Insights Association and adheres to its internationally respected Code of Standards.

The Computing Technology Industry Association (CompTIA) is a non-profit trade association serving as the voice of the information technology industry.

With approximately 2,000 member companies, 3,000 academic and training partners, 100,000-plus registered users and more than two million IT certifications issued, CompTIA is dedicated to advancing industry growth through educational programs, market research, networking events, professional certifications, and public policy advocacy.

CompTIA’s efforts to address issues related to emerging technology include member-led communities focused on businesses and IT professionals along with a policy group focused on legislation and regulations.
